Expose your SaaS to AI agents

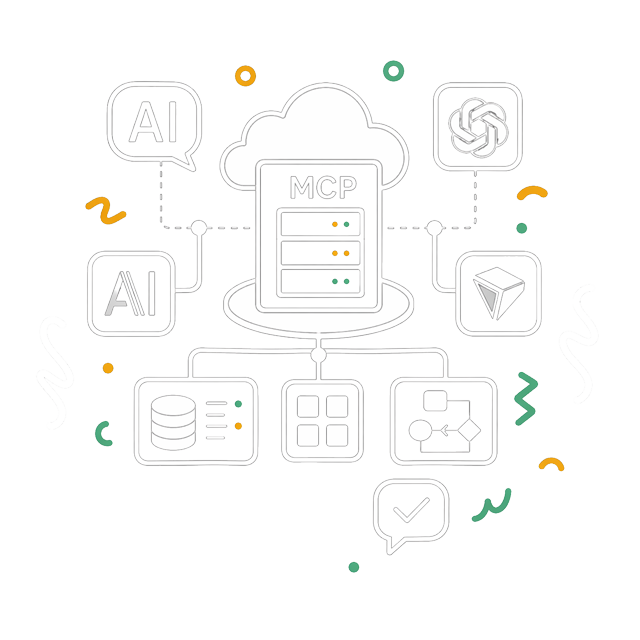


Product Engineering • MCP Expertise

Your product becomes usable by Claude, ChatGPT, Cursor, and every MCP-compatible AI agent. Your data, features, and workflows become available directly inside an AI conversation, with no rebuild required.

In 2012 you needed a mobile app. In 2016, a public API. In 2026, you need an MCP server.

An MCP server is a distribution channel for AI. It lets an AI assistant answer your users' questions using your data and your SaaS tools. Without an MCP server, the AI answers from its generic training data. With one, it queries your product and uses your real data.

What's at stake: being present in the conversation your users have with their AI, instead of waiting for them to open your app.

The Model Context Protocol (MCP) is an open standard released by Anthropic in November 2024. An MCP server exposes tools, each with a name, a description, and parameters, that an AI agent reads, picks, and calls directly inside a conversation.
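To make "a name, a description, and parameters" concrete, here is a minimal sketch of what one tool looks like in the payload a server returns for the MCP `tools/list` method. The field names (`name`, `description`, `inputSchema`) come from the protocol; the tool itself (`audit_business_profile`) and its parameters are hypothetical, chosen only for illustration.

```python
import json

# Hypothetical tool definition, shaped like one entry of an MCP `tools/list` result.
AUDIT_TOOL = {
    "name": "audit_business_profile",
    "description": (
        "Audit the public Google profile of a local business. "
        "Use when the user asks for an audit of a named business in a given city."
    ),
    # Parameters are described with JSON Schema so the model knows what to send.
    "inputSchema": {
        "type": "object",
        "properties": {
            "business_name": {
                "type": "string",
                "description": "Name of the business, e.g. 'Bakery X'.",
            },
            "city": {
                "type": "string",
                "description": "City where the business is located, e.g. 'Lyon'.",
            },
        },
        "required": ["business_name", "city"],
    },
}


def list_tools_result() -> dict:
    """Return the payload a server would send back for `tools/list`."""
    return {"tools": [AUDIT_TOOL]}


if __name__ == "__main__":
    print(json.dumps(list_tools_result(), indent=2))
```

In practice an SDK such as FastMCP or the official TypeScript SDK generates this payload from your function signatures; the sketch only shows what the agent ends up reading.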

It's like a REST API, except the consumer is not a developer but an LLM. A classic API expects its caller to already know the right calls and parameters. An MCP server lets the AI read the list of tools and decide which one to invoke based on what the user asks.

Your data gets cited, and your users can use your product from Claude, Cursor, or ChatGPT without ever opening your app.
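The discover-then-call flow can be sketched with plain Python dictionaries. MCP messages are JSON-RPC 2.0, and `tools/list` and `tools/call` are the two methods involved. Everything else here is an assumption made for illustration: the `search_notes` tool, its canned answer, and the `handle` dispatcher, which skips transport, initialization, and unknown-tool handling.

```python
def search_notes(query: str) -> str:
    """Hypothetical tool body; a real server would query the product's data."""
    return f"2 notes match '{query}'"


# Registry of exposed tools: metadata for the model plus the Python handler.
TOOLS = {
    "search_notes": {
        "description": "Search the user's notes by keyword and summarize the matches.",
        "inputSchema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
        "handler": search_notes,
    }
}


def handle(request: dict) -> dict:
    """Answer a JSON-RPC 2.0 request for the two tool-related MCP methods."""
    method = request["method"]
    params = request.get("params", {})
    if method == "tools/list":
        # Step 1: the agent discovers what exists and reads the descriptions.
        result = {
            "tools": [
                {
                    "name": name,
                    "description": tool["description"],
                    "inputSchema": tool["inputSchema"],
                }
                for name, tool in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        # Step 2: the agent picks a tool by name and supplies the arguments.
        tool = TOOLS[params["name"]]
        text = tool["handler"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}]}
    else:
        return {
            "jsonrpc": "2.0",
            "id": request["id"],
            "error": {"code": -32601, "message": f"Method not found: {method}"},
        }
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


if __name__ == "__main__":
    print(handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"}))
    print(handle({
        "jsonrpc": "2.0",
        "id": 2,
        "method": "tools/call",
        "params": {"name": "search_notes", "arguments": {"query": "pricing"}},
    }))
```

The point of the contrast with REST: no developer hard-codes the second request. The model composes it after reading the first response.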

Three scenarios where an MCP server changes the game for a product:

Your business data becomes queryable in natural language from Claude, ChatGPT, or Cursor.

Notes, specs, articles, documents: an AI agent connected via MCP summarizes, searches, and links them in natural language.

n8n, Claude, ChatGPT, or Cursor: every MCP client can automate tasks from your product without custom integration work.

From idea to deployed MCP server.

Which tools to expose, to which AI audience, for which critical data. Clarity on the capabilities that matter.

JSON schema, naming, descriptions written as prompts, LLM-actionable errors.

Stack tailored to the need: FastMCP in Python, the official TypeScript SDK, OAuth 2.1 for remote multi-user servers, with client registration adapted to the context.

Production rollout, real-call monitoring, description tuning based on observed agent behavior.
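The "LLM-actionable errors" part of the design step can be sketched as follows. In MCP, a tool-level failure is returned as a normal result with `isError` set to true, so the model can read the message and correct itself. The tool, the city list, and the wording of the messages are hypothetical; the idea is simply that each error tells the agent what to do next.

```python
KNOWN_CITIES = {"Lyon", "Paris"}  # hypothetical data, for illustration only


def error_result(message: str) -> dict:
    """Tool-level failure: a regular tool result flagged with isError."""
    return {"content": [{"type": "text", "text": message}], "isError": True}


def ok_result(text: str) -> dict:
    """Successful tool result carrying a single text block."""
    return {"content": [{"type": "text", "text": text}], "isError": False}


def audit_business_profile(arguments: dict) -> dict:
    """Hypothetical tool: validate inputs and explain how to fix bad ones."""
    name = str(arguments.get("business_name", "")).strip()
    city = str(arguments.get("city", "")).strip()
    if not name:
        return error_result(
            "Missing 'business_name'. Ask the user for the exact business "
            "name, then call this tool again."
        )
    if city not in KNOWN_CITIES:
        options = ", ".join(sorted(KNOWN_CITIES))
        return error_result(
            f"Unknown city '{city}'. Supported cities: {options}. "
            "Retry with one of these values."
        )
    return ok_result(f"Audit for {name} ({city}): profile found.")


if __name__ == "__main__":
    print(audit_business_profile({"business_name": "Bakery X", "city": "Nice"}))
```

Compare this with a bare "400 Bad Request": a developer would go read the docs, but an agent has only the message in front of it.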

The technical building blocks I use, picked by project context:

An MCP server is not a copy of your internal API. The more tools you expose, the more the AI gets lost, burns tokens for nothing, and hallucinates off-context calls. A good rule: start small, then add tools only when real usage calls for them.

On my two MCP servers in production, I deliberately created only 3 tools:

The rule: one tool = one clear responsibility. No duplicates, no "just-in-case".

The tool description is read by an LLM, not a human. It's a prompt. If it's vague, the AI will never call it, or worse, will call it incorrectly.
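A small sketch of the difference between a vague and a prompt-like description. Both descriptions and the `description_warnings` checker are hypothetical: the checks are rough heuristics of my own for illustration, not part of MCP or of any SDK.

```python
VAGUE = "Gets data."

PRECISE = (
    "Search the user's Markdown documents by keyword. "
    "Use this when the user asks to find, summarize, or compare their own "
    "notes or articles. Returns up to 10 matches with title and a short "
    "excerpt. Do not use it for general web questions."
)


def description_warnings(description: str) -> list[str]:
    """Flag descriptions that leave the model guessing (rough heuristics)."""
    warnings = []
    lowered = description.lower()
    if len(description.split()) < 12:
        warnings.append("too short to tell the model what the tool does")
    if "use this when" not in lowered and "use when" not in lowered:
        warnings.append("does not say when to call the tool")
    if "return" not in lowered:
        warnings.append("does not say what the tool returns")
    return warnings


if __name__ == "__main__":
    for label, text in (("vague", VAGUE), ("precise", PRECISE)):
        print(label, description_warnings(text))
```

The precise version answers the three questions an agent has before calling: what the tool does, when to use it, and what comes back.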

I run two MCP servers in production on my own SaaS:

A user asks Claude "audit the Google profile of Bakery X in Lyon" and gets a real report with Begonia's data, not a generic answer.

Begonia.pro

The MCP is used to expose Markdown documents. The user can request "write an article for my blog on the topic XXXX by analyzing the writing style of my other articles".

Fude.md

Questions about MCP servers:

Whether you run an existing SaaS or just have an idea worth exploring, let's talk. I help you pick the right tools, design a useful MCP server, and ship it cleanly.